Simultaneous Localization and Mapping (SLAM)

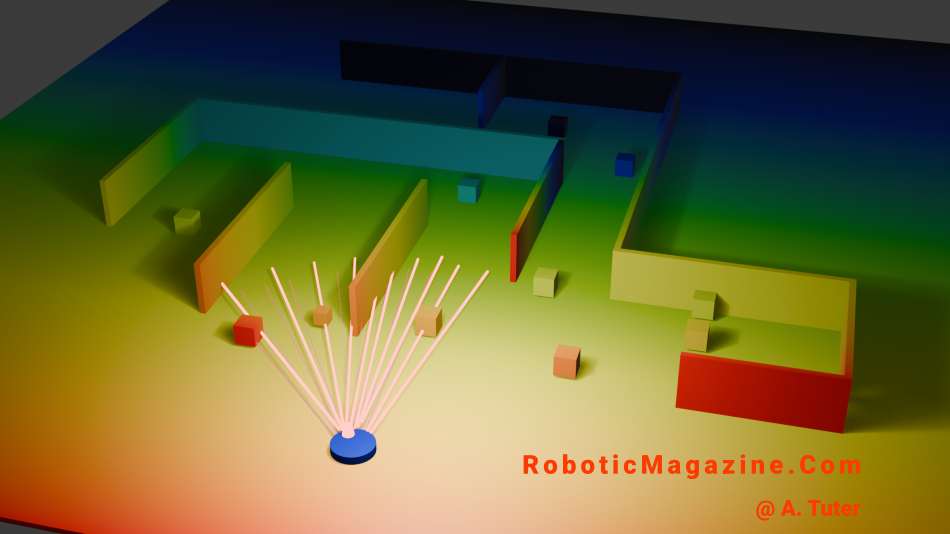

Simultaneous Localization and Mapping (SLAM) is a core technology in robotics that allows a machine to build a map of an unknown environment while simultaneously determining its own position within that map. This capability is essential for robots operating in places where GPS is unavailable, such as indoors, deep underground, or within complex warehouse layouts. To function effectively, SLAM combines data from various sensors, including LiDAR, cameras, Inertial Measurement Units (IMUs), and wheel encoders. As the robot moves, the algorithm estimates its motion, detects environmental landmarks, and continuously updates both the map and the robot’s estimated trajectory.
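The motion-estimation step described above can be illustrated with plain dead reckoning. This is a minimal sketch, assuming a planar unicycle motion model driven by a forward speed `v` and a turn rate `omega`; the function names `integrate_odometry` and `dead_reckon` are hypothetical and do not come from any particular SLAM library.

```python
import math

def integrate_odometry(pose, v, omega, dt):
    """Advance a planar pose (x, y, theta) by one time step.

    Assumes a simple unicycle model: move along the current heading
    at speed v, then rotate by omega * dt.
    """
    x, y, theta = pose
    x += v * math.cos(theta) * dt
    y += v * math.sin(theta) * dt
    theta += omega * dt
    return (x, y, theta)

def dead_reckon(start, commands, dt):
    """Chain odometry steps into a trajectory (list of poses)."""
    pose = start
    trajectory = [pose]
    for v, omega in commands:
        pose = integrate_odometry(pose, v, omega, dt)
        trajectory.append(pose)
    return trajectory

# Drive straight for 1 s at 1 m/s, once with ideal sensing and once
# with a small, constant turn-rate bias (an assumed 0.05 rad/s).
commands = [(1.0, 0.0)] * 10
ideal = dead_reckon((0.0, 0.0, 0.0), commands, dt=0.1)
biased = dead_reckon((0.0, 0.0, 0.0), [(v, w + 0.05) for v, w in commands], dt=0.1)
```

Even a small bias makes the `biased` trajectory curve away from the `ideal` one, and the gap keeps growing with distance travelled. This is why odometry alone is not enough and the estimate has to be corrected against observed landmarks.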

Several approaches are used to solve the SLAM problem, each with distinct strengths:

- Extended Kalman Filter (EKF-SLAM): Maintains a joint Gaussian estimate of the robot pose and all landmark positions. It works well for small maps, but each update costs O(N^2) in the number of landmarks N because the full covariance matrix must be updated, so it becomes computationally expensive as the map grows.

- Particle Filter (FastSLAM): Represents many candidate robot trajectories simultaneously as “particles,” each carrying its own map estimate, and weights each particle by how well it explains the actual sensor observations so that the best-matching hypotheses survive.

- Graph-based SLAM: The modern standard. Robot poses are the nodes of a graph and sensor measurements are the edges (constraints) between them; the graph is then optimized, typically by least squares, to minimize the total constraint error.

- Visual SLAM (V-SLAM): Relies primarily on cameras to identify visual features rather than using laser-based distance sensors.
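The particle-filter idea from the list above can be made concrete with a single measurement update. The sketch below is deliberately reduced and rests on several assumptions: the world is one-dimensional, the landmark position is already known (so this shows only the localization half of FastSLAM, not the per-particle map), and range noise is Gaussian. The names `gaussian_likelihood` and `reweight` are hypothetical.

```python
import math

def gaussian_likelihood(error, sigma):
    """Unnormalized Gaussian likelihood of a measurement error."""
    return math.exp(-0.5 * (error / sigma) ** 2)

def reweight(particles, measured_range, landmark, sigma):
    """Weight each candidate position by how well it explains the range reading.

    particles: candidate robot positions on a line (assumed 1D world).
    landmark:  known landmark position (an assumption of this sketch).
    """
    weights = []
    for p in particles:
        expected_range = abs(landmark - p)
        weights.append(gaussian_likelihood(expected_range - measured_range, sigma))
    total = sum(weights)
    if total == 0.0:
        # Every particle is implausible; fall back to uniform weights.
        return [1.0 / len(particles)] * len(particles)
    return [w / total for w in weights]

# Four hypotheses about where the robot is; the sensor reports that
# the landmark at position 10.0 is 4.0 units away.
particles = [2.0, 5.0, 6.0, 9.0]
weights = reweight(particles, measured_range=4.0, landmark=10.0, sigma=0.5)
best = particles[weights.index(max(weights))]
```

Here the particle at 6.0 receives almost all of the weight because it predicts exactly the measured range. A full filter would follow this with a resampling step that duplicates high-weight particles and discards low-weight ones.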

Despite its success, SLAM remains challenging due to sensor noise, dynamic environments with moving objects, and the high demand for real-time computation. A critical component in overcoming these challenges is loop closure: the ability of a robot to recognize a previously visited location. When a loop is closed, the system can correct the “drift,” the position error that accumulates over long distances, effectively snapping the map back into proper alignment.
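The effect of loop closure can be sketched with a tiny one-dimensional pose graph. This is an illustrative toy, not a production optimizer: it assumes scalar poses, linear constraints of the form `x[j] - x[i] ≈ z`, equal weights, and solves the least-squares problem with Gauss-Seidel sweeps. The function name `optimize_pose_graph_1d` and the numbers are made up for the example.

```python
def optimize_pose_graph_1d(num_poses, edges, sweeps=500):
    """Minimize the sum of w * (x[j] - x[i] - z)^2 over edges (i, j, z, w).

    Pose 0 stays fixed at 0.0 to anchor the map. Each sweep sets every
    other pose to the weighted average that its incident edges ask for.
    """
    x = [0.0] * num_poses
    for _ in range(sweeps):
        for k in range(1, num_poses):
            num = 0.0
            den = 0.0
            for i, j, z, w in edges:
                if j == k:      # this edge predicts x[k] = x[i] + z
                    num += w * (x[i] + z)
                    den += w
                elif i == k:    # this edge predicts x[k] = x[j] - z
                    num += w * (x[j] - z)
                    den += w
            if den > 0.0:
                x[k] = num / den
    return x

# Out-and-back run: the true steps are +1, +1, -1, -1, so the robot ends
# where it started. Each odometry reading is biased by +0.1 (assumed).
odometry = [1.1, 1.1, -0.9, -0.9]
edges = [(i, i + 1, u, 1.0) for i, u in enumerate(odometry)]

# Odometry only: the estimate simply follows the drifting chain.
drifted = optimize_pose_graph_1d(5, edges)

# Loop closure: pose 4 is recognized as the same place as pose 0.
closed = optimize_pose_graph_1d(5, edges + [(0, 4, 0.0, 1.0)])
```

With odometry alone the final pose lands at about 0.4 instead of 0. Adding the single loop-closure edge spreads the 0.4 error evenly over the five constraints and pulls the final pose to about 0.08. Giving the loop-closure edge a larger weight than the odometry edges would pull it closer still to 0.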

Today, SLAM is a foundational element in a wide range of applications. It powers robot vacuum cleaners, drones, warehouse automation robots, and self-driving vehicles, while also serving as the backbone for spatial tracking in augmented reality (AR) systems.